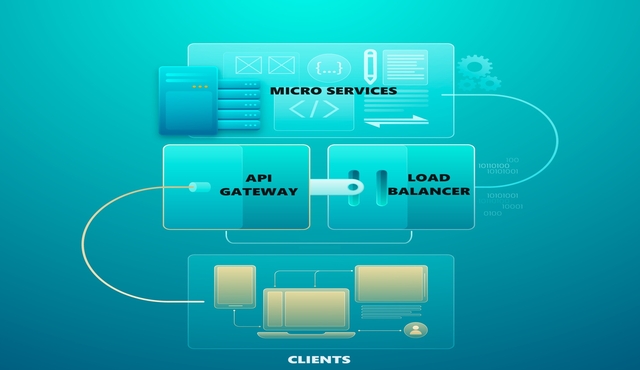

In today’s rapidly evolving landscape of microservices architecture, the API Gateway pattern emerges as a crucial component for building scalable, resilient, and secure distributed systems. Serving as a central entry point for client requests, the API Gateway acts as a reverse proxy that efficiently manages, routes, and secures traffic between clients and the underlying microservices. By encapsulating various cross-cutting concerns such as authentication, authorization, rate limiting, and protocol translation, API Gateways streamline client interactions, enhance security, and simplify the complexities of microservices communication. In this blog, we delve into the intricacies of the API Gateway pattern, exploring its benefits, challenges, and best practices for designing and implementing robust API Gateway solutions.

API Gateway

The API Gateway pattern acts as a single entry point for client requests, routing them to the appropriate microservices and simplifying the interaction between clients and the underlying microservices.

By consolidating multiple API endpoints into a unified interface, the API Gateway enables clients to access various functionalities without needing to know the internal structure of the microservices ecosystem. Additionally, the API Gateway can handle cross-cutting concerns such as authentication, authorization, rate limiting, and request aggregation, offloading these responsibilities from individual microservices. This centralized approach enhances security, improves performance, and facilitates easier management of client interactions. However, it’s essential to design and implement the API Gateway carefully so that it does not become a single point of failure. By distributing and scaling the API Gateway as needed and implementing robust error handling mechanisms, organizations can ensure its reliability and resilience in supporting complex microservices architectures. Overall, the API Gateway design pattern plays a pivotal role in streamlining client-server communication and promoting agility and flexibility in microservices-based systems.
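
To make the routing idea concrete, here is a minimal sketch of prefix-based routing. The service names, URLs, and the `resolve_upstream` helper are assumptions made up for illustration; real gateways usually express the same mapping in configuration rather than code.

```python
from typing import Optional

# Hypothetical routing table: public path prefix -> internal service base URL.
ROUTES = {
    "/orders": "http://orders.internal:8080",
    "/users": "http://users.internal:8080",
    "/users/avatars": "http://media.internal:8080",
}


def resolve_upstream(path: str, routes: dict = ROUTES) -> Optional[str]:
    """Return the upstream URL for a client path, or None if no route matches.

    The longest matching prefix wins, so "/users/avatars/1" goes to the
    media service rather than the users service.
    """
    best = None
    for prefix in routes:
        # Match whole path segments only, so "/usersx" does not match "/users".
        matches = path == prefix or path.startswith(prefix + "/")
        if matches and (best is None or len(prefix) > len(best)):
            best = prefix
    if best is None:
        return None
    return routes[best] + path
```

The client only ever sees the public paths; where each service actually lives stays a detail of the routing table.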

Advantages:

1. Unified API Interface:

The API Gateway consolidates multiple microservices behind a single entry point, giving clients one unified API. Clients integrate with a single interface instead of many, and the complexity of the underlying microservices architecture stays hidden from them.

API Gateways can also translate and transform requests and responses, so the interface stays consistent even when backend services use different protocols or data formats. Both capabilities are described below.

1.1. Service Aggregation:

API Gateways aggregate multiple microservices into a single entry point by exposing a unified set of APIs that encapsulate the functionalities provided by the underlying microservices. The gateway acts as a facade, abstracting the complexities of the individual services and presenting clients with a unified and consistent API surface.

1.1.1. Simplified Client Interactions:

Clients interact with the API Gateway using a single interface, regardless of the number or complexity of the underlying microservices. This reduces the cognitive burden on clients, as they only need to understand and integrate with one API.

1.1.2. Optimized Communication:

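By routing each client request to the appropriate backend service and combining calls where possible, the API Gateway reduces the number of round-trips a client has to make. The gateway itself adds one network hop, so the latency gain comes from fewer client-to-backend round-trips, which matters most over slow or high-latency client connections.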

1.1.3. Reduced Implementation Complexity:

From the client’s perspective, interacting with a unified API interface provided by the gateway simplifies development efforts, as clients do not need to manage multiple endpoints or understand the details of underlying microservices. Similarly, on the backend, the gateway abstracts away service-specific logic, allowing developers to focus on implementing business logic without worrying about exposing or managing APIs.

1.2. Protocol Translation and Transformation:

API Gateways can translate and transform requests and responses between different protocols and data formats, ensuring compatibility between clients and backend services. For example, the gateway can accept RESTful HTTP requests from clients and translate them into gRPC calls or message queue requests understood by backend services.

1.2.1. Simplified Client Interactions:

Clients can communicate with the API Gateway using their preferred protocols and data formats, without needing to conform to the specific requirements of individual services. This flexibility improves interoperability and reduces friction in client-server communication.

1.2.2. Optimized Communication:

By standardizing communication protocols and data formats, the API Gateway streamlines data transmission and reduces protocol overhead, leading to more efficient communication between clients and services.

1.2.3. Reduced Implementation Complexity:

Developers can focus on implementing business logic within the backend services without needing to worry about protocol compatibility or data format conversions. The API Gateway handles these concerns transparently, simplifying development efforts and reducing the risk of integration errors.

2. Cross-Cutting Concerns:

API Gateways can handle cross-cutting concerns such as authentication, authorization, rate limiting, and request aggregation. By centralizing these concerns within the gateway, organizations can enforce consistent policies, protect against security threats, optimize resource usage, and streamline client-server communication, which improves the overall quality and reliability of their systems.

2.1. Authentication:

API Gateways authenticate incoming requests by validating credentials, such as API keys, tokens, or user credentials, against an identity provider or authentication service. Once a request is authenticated, the API Gateway attaches identity information (e.g., user ID, roles) to it, allowing downstream services to make access control decisions based on the user’s identity. This improves security by ensuring that only authenticated users or clients can access protected resources, preventing unauthorized access to sensitive data or functionalities.
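
The flow above can be sketched as follows. This is an illustration only: the token format (a base64 payload plus an HMAC signature), the shared `SECRET`, and the `X-User-Id` / `X-User-Roles` header names are all assumptions, and a production gateway would normally validate a standard token such as a JWT against an identity provider instead.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional

SECRET = b"demo-secret"  # assumption: signing key shared with the token issuer


def sign(payload: dict, secret: bytes = SECRET) -> str:
    """Issue a demo token: base64url(JSON payload) + "." + hex HMAC-SHA256."""
    body = base64.urlsafe_b64encode(json.dumps(payload).encode()).decode()
    sig = hmac.new(secret, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{sig}"


def authenticate(headers: dict, secret: bytes = SECRET) -> Optional[dict]:
    """Validate the bearer token; return headers to forward, or None to reject."""
    auth = headers.get("Authorization", "")
    if not auth.startswith("Bearer "):
        return None
    body, _, sig = auth[len("Bearer "):].rpartition(".")
    expected = hmac.new(secret, body.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the signature matched.
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return None
    claims = json.loads(base64.urlsafe_b64decode(body))
    forwarded = dict(headers)
    # Overwrite any client-supplied identity headers so they cannot be spoofed.
    forwarded["X-User-Id"] = str(claims["sub"])
    forwarded["X-User-Roles"] = ",".join(claims.get("roles", []))
    return forwarded
```

Downstream services can then trust the identity headers, provided they are reachable only through the gateway.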

2.2. Authorization:

After authentication, API Gateways enforce access control policies to determine whether the authenticated user or client has permission to perform the requested action. Access control policies can be based on various factors, such as user roles, scopes, or attributes, and are typically defined and managed centrally within the API Gateway. This enhances security by restricting access to resources based on predefined rules, preventing unauthorized users from accessing or modifying sensitive data or functionalities.
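
A role-based check of this kind might look like the sketch below. The `POLICIES` table and the role names are hypothetical; the point is the deny-by-default lookup, not the specific rules.

```python
# Hypothetical policy table: (HTTP method, path prefix, roles allowed).
POLICIES = [
    ("GET", "/orders", {"customer", "admin"}),
    ("POST", "/orders", {"customer", "admin"}),
    ("DELETE", "/orders", {"admin"}),
]


def is_allowed(method: str, path: str, roles, policies=POLICIES) -> bool:
    """Return True if any of the caller's roles is permitted by a matching rule."""
    for rule_method, prefix, allowed in policies:
        path_matches = path == prefix or path.startswith(prefix + "/")
        if method == rule_method and path_matches:
            return bool(allowed & set(roles))
    # No rule matched: deny by default.
    return False
```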

2.3. Rate Limiting:

API Gateways enforce rate limits on incoming requests to prevent abuse, denial-of-service attacks, or excessive resource consumption. Rate limits can be configured based on criteria such as the number of requests per second, per minute, or per user, and can be applied globally or per API endpoint. This improves security by mitigating the risk of service disruptions or performance degradation caused by excessive traffic or malicious behavior. This also enhances performance by ensuring that resources are allocated efficiently and fairly among users or clients, preventing any single user or client from monopolizing resources.
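
One common way to implement per-client limits is a token bucket, sketched below. The source does not name an algorithm, so the token bucket is a choice made here for illustration; the class names and the injectable `clock` parameter are likewise assumptions.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill for the time elapsed since the last call, capped at capacity.
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class RateLimiter:
    """Keeps one bucket per client so no single client can starve the others."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets = {}

    def allow(self, client_id: str) -> bool:
        bucket = self.buckets.get(client_id)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[client_id] = bucket
        return bucket.allow()
```

A gateway would typically answer a rejected request with HTTP 429 (Too Many Requests). With several gateway instances, the bucket state would need to live in a shared store, which this in-memory sketch does not cover.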

2.4. Request Aggregation:

API Gateways can aggregate requests before forwarding them to backend services. Aggregation can mean combining related requests (e.g., fetching data from multiple microservices in a single client call) or merging redundant requests (e.g., deduplicating identical requests). This improves performance by reducing the number of round-trips between clients and services, minimizing network latency, and optimizing resource utilization. It also enhances maintainability by simplifying client interactions and reducing the complexity of backend service logic, leading to cleaner, more modular code and easier troubleshooting and debugging.
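
As a rough sketch of the first case, the gateway can fan one client request out to several services concurrently and merge the results. The `fetch_user` and `fetch_orders` coroutines are hypothetical stand-ins for real HTTP or gRPC calls.

```python
import asyncio


async def fetch_user(user_id: str) -> dict:
    # Stand-in for a call to a hypothetical user service.
    await asyncio.sleep(0)
    return {"id": user_id, "name": "Ada"}


async def fetch_orders(user_id: str) -> list:
    # Stand-in for a call to a hypothetical order service.
    await asyncio.sleep(0)
    return [{"order_id": 1, "user_id": user_id}]


async def get_profile(user_id: str) -> dict:
    """Serve one client request with two backend calls made concurrently."""
    user, orders = await asyncio.gather(fetch_user(user_id), fetch_orders(user_id))
    return {"user": user, "orders": orders}
```

Because the backend calls run concurrently, the client waits roughly as long as the slowest call rather than the sum of both.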

3. Improved Performance:

API Gateways can play a vital role in improving the performance of microservices-based applications. They can improve performance by caching frequently requested data, reducing the number of round-trips between clients and services, balancing the load between services, and implementing fault tolerance. They optimize data transmission by aggregating and compressing responses, minimizing the network overhead. Additionally, API Gateways can offload cross-cutting concerns such as authentication and authorization, allowing backend services to focus on business logic. By implementing load balancing and routing strategies, API Gateways distribute requests evenly across backend instances, optimizing resource utilization. API Gateways can also implement protocol and data format optimizations to streamline communication and reduce processing time.

3.1. Response Aggregation & Caching:

By aggregating and caching responses, the API Gateway can improve the overall performance of the system. It reduces latency by minimizing the number of network round-trips required for client requests and optimizing data transmission.
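
A minimal sketch of response caching, assuming a simple time-to-live (TTL) policy. The `TTLCache` class and its `get_or_fetch` method are illustrative names, not part of any particular gateway product.

```python
import time


class TTLCache:
    """Caches backend responses for a fixed number of seconds."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_fetch(self, key, fetch):
        """Return the cached value for `key`, calling `fetch()` only on a miss."""
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = fetch()
        self._entries[key] = (now + self.ttl, value)
        return value
```

The key would usually combine the HTTP method, the path, and any headers that change the response. Choosing the TTL is a trade-off between backend load and how stale a response is allowed to be.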

3.2. Load Balancing:

API Gateways can play a significant role in load balancing. They can distribute incoming client requests across multiple instances of backend services to ensure that the load is evenly distributed and no single instance is overwhelmed. This helps improve the overall performance, scalability, and reliability of the system by preventing any individual service from becoming a bottleneck. API Gateways can use various load-balancing algorithms such as round-robin, least connections, or weighted distribution to allocate requests to backend services based on factors like server health, available resources, and current workload.
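
Two of the algorithms mentioned above, round-robin and least connections, can be sketched in a few lines. The class names are assumptions, and the backends are plain strings standing in for service instances.

```python
import itertools


class RoundRobin:
    """Hands out backends in a fixed rotation."""

    def __init__(self, backends):
        self._cycle = itertools.cycle(backends)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Picks the backend with the fewest in-flight requests."""

    def __init__(self, backends):
        self.active = {backend: 0 for backend in backends}

    def acquire(self):
        # Ties go to the backend listed first.
        backend = min(self.active, key=self.active.get)
        self.active[backend] += 1
        return backend

    def release(self, backend):
        # Call when the request to `backend` has finished.
        self.active[backend] -= 1
```

Round-robin needs no feedback from the backends, while least connections adapts when some requests take much longer than others.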

3.3. Fault Tolerance:

API Gateways can detect failures or errors in backend services and implement fault tolerance mechanisms to mitigate their impact. This may involve rerouting requests away from failed or unhealthy services to healthy ones, implementing retry mechanisms to resend failed requests, or providing fallback responses when necessary. By monitoring the health and availability of backend services, API Gateways can dynamically adjust their routing and load-balancing strategies to maintain system reliability and minimize downtime. Additionally, API Gateways can implement circuit-breaking mechanisms to isolate and contain failures, preventing them from cascading throughout the system and causing widespread disruption.
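
The rerouting part of this can be sketched as a simple failover loop. `call_with_failover` and the `send` callable are hypothetical, and treating only `ConnectionError` as a reason to fail over is an assumption made to keep the example small.

```python
def call_with_failover(backends, send):
    """Try each backend in order and return the first successful response.

    `send(backend)` is expected to raise ConnectionError when the backend
    is unreachable.
    """
    failed = []
    for backend in backends:
        try:
            return send(backend)
        except ConnectionError:
            failed.append(backend)
    raise RuntimeError(f"all backends failed: {failed}")
```

Failing over is only safe for requests that can be repeated without side effects, so a gateway would normally restrict it to idempotent operations.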

4. Scalability:

The API Gateway can be scaled independently of the underlying microservices, allowing organizations to handle increased client traffic effectively. Horizontal scaling techniques such as load balancing and clustering ensure that the API Gateway can accommodate growing needs.

By deploying multiple instances of the gateway, distributing traffic evenly across those instances, and ensuring high availability through clustering, organizations can effectively handle increased client traffic and accommodate growing needs in their microservices architectures.

4.1. Horizontal Scaling:

API Gateways can be horizontally scaled by adding more instances or replicas to handle increased client traffic. This involves deploying multiple instances of the API Gateway across multiple servers or containers. Each instance of the API Gateway is identical and independent, allowing them to share the incoming client load and distribute traffic evenly. Horizontal scaling ensures that the API Gateway can handle growing needs by adding additional capacity as required, without impacting the performance or availability of the system.

4.2. Load Balancing:

API Gateways use load balancing techniques to distribute incoming client requests across multiple instances of the gateway. Load balancers are deployed in front of the API Gateway instances to evenly distribute the traffic.

Load balancers can use various algorithms, such as round-robin, least connections, or weighted distribution, to decide which instance of the API Gateway receives each request. By spreading the workload across multiple instances, load balancing ensures optimal resource utilization and prevents any single instance from becoming a bottleneck.

4.3. Clustering:

API Gateways can be deployed in a clustered configuration, where multiple instances form a cluster and work together to handle client requests. Clustering enables seamless failover and high availability by ensuring that if one instance of the API Gateway fails, the remaining instances can continue to serve client requests.

Clustering also improves scalability by allowing new instances to be added to the cluster dynamically, without disrupting ongoing operations. Additionally, clustering facilitates centralized management and configuration of multiple API Gateway instances, simplifying administration and maintenance tasks.

5. Protocols and Data Format Conversions:

API Gateways can convert requests and responses between different protocols and data formats. One of the key features of an API Gateway is its ability to act as a mediator between clients and microservices, translating requests and responses as needed to facilitate communication. API Gateways play a crucial role in facilitating interoperability between clients and microservices by handling protocol and data format conversion smoothly. This capability enables organizations to build flexible and scalable architectures that can accommodate diverse communication requirements across different systems and technologies.

5.1. Protocol Conversions:

API Gateways can accept requests from clients using one protocol (e.g., HTTP/1.1, HTTP/2, WebSockets) and translate them into another protocol understood by the microservices (e.g., gRPC, MQTT, AMQP). This allows clients to communicate with the API Gateway using their preferred protocol while still integrating cleanly with backend services that use different protocols.

5.2. Data Format Conversions:

API Gateways can also convert data formats between clients and microservices. For example, a client may send a request in JSON format, but the backend microservice expects data in XML format. The API Gateway can parse the incoming request, transform the JSON payload into XML format, and forward it to the microservice. Similarly, the API Gateway can convert the response from the microservice back into the format required by the client before sending it back to the client.
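
The JSON-to-XML direction could look roughly like this. The mapping rules are assumptions chosen for the sketch (object keys become element names, list entries become `<item>` elements); a real integration would follow whatever schema the backend service defines.

```python
import json
import xml.etree.ElementTree as ET


def json_to_xml(json_text: str, root_tag: str = "request") -> str:
    """Convert a JSON document to XML using a simple, assumed mapping."""

    def build(parent, value):
        if isinstance(value, dict):
            # Assumes every key is a valid XML element name.
            for key, child in value.items():
                build(ET.SubElement(parent, key), child)
        elif isinstance(value, list):
            for item in value:
                build(ET.SubElement(parent, "item"), item)
        else:
            # Scalars become text; booleans come out as "True"/"False".
            parent.text = "" if value is None else str(value)

    root = ET.Element(root_tag)
    build(root, json.loads(json_text))
    return ET.tostring(root, encoding="unicode")
```

For example, `json_to_xml('{"id": 7}')` yields `<request><id>7</id></request>`.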

5.3. Content Negotiation:

API Gateways can support content negotiation. Clients state the response formats they prefer (e.g., JSON, XML, Protobuf) in the HTTP Accept header and declare the format of the request body in the Content-Type header. The API Gateway can examine these headers and convert request and response payloads to the appropriate format based on the client’s preferences and the capabilities of the microservices.
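
A simplified sketch of choosing a response format from the Accept header is shown below. It honors q-values and wildcards but does not implement every precedence rule of the HTTP specification, and `choose_media_type` is a made-up helper name.

```python
from typing import Optional


def choose_media_type(accept_header: str, supported: list) -> Optional[str]:
    """Pick the supported media type the client prefers most, or None."""
    prefs = []
    for part in accept_header.split(","):
        fields = part.strip().split(";")
        media = fields[0].strip().lower()
        if not media:
            continue
        q = 1.0  # default weight when no q parameter is given
        for param in fields[1:]:
            name, _, value = param.strip().partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0
        prefs.append((q, media))
    # Stable sort: entries with equal weight keep their header order.
    prefs.sort(key=lambda pref: pref[0], reverse=True)
    for q, media in prefs:
        if q <= 0:
            continue
        for candidate in supported:
            wildcard = media.endswith("/*") and candidate.startswith(media[:-1])
            if media in ("*/*", candidate) or wildcard:
                return candidate
    return None
```

When this returns `None`, a gateway would typically respond with HTTP 406 (Not Acceptable) or fall back to a default format.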

5.4. Message Transformation:

In addition to simple data format conversion, API Gateways can perform more complex message transformations, such as filtering, enrichment, and aggregation. This enables the API Gateway to modify or enhance the content of requests and responses as they pass through, providing additional value-added services to clients and microservices.

5.4.1. Filtering:

API Gateways can filter incoming requests or outgoing responses based on specific criteria. For example, the gateway may only allow requests with certain headers or parameters to pass through, while blocking others. Similarly, responses from backend services can be filtered to include only relevant data before sending them back to the client. This filtering capability helps ensure that only necessary information is exchanged between clients and services, improving efficiency and security.
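
Both directions of filtering can be sketched briefly. The required header and the list of public fields are hypothetical examples.

```python
REQUIRED_HEADERS = {"x-api-version"}    # hypothetical request requirement
PUBLIC_FIELDS = {"id", "name", "email"}  # hypothetical response allowlist


def request_passes(headers: dict) -> bool:
    """Let a request through only if every required header is present."""
    present = {name.lower() for name in headers}
    return REQUIRED_HEADERS <= present


def filter_response(payload: dict, allowed=PUBLIC_FIELDS) -> dict:
    """Keep only the fields that clients are meant to see."""
    return {key: value for key, value in payload.items() if key in allowed}
```

An allowlist is the safer default for responses: a field newly added by a backend service stays hidden until someone decides to expose it.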

5.4.2. Enrichment:

API Gateways can enrich messages by adding additional data, content or context to requests or responses. For instance, the gateway may append user authentication information, such as user roles or permissions, to requests before forwarding them to backend services. Similarly, responses from the services can be enriched with metadata or additional attributes to provide clients with more comprehensive information. Enrichment enhances the value of messages exchanged within the system, enabling better-informed decisions and actions.

5.4.3. Aggregation:

API Gateways can aggregate data from multiple sources or services into a single response to fulfill client requests. This capability is particularly useful in microservices architectures where a single client request may require data from multiple backend services. The gateway can manage calls to different services, collect the results, and combine them into a unified response. Aggregation simplifies client interactions by reducing the number of round-trips and consolidating information into an organized format.

6. Logging and Monitoring:

Centralized logging and monitoring capabilities provided by the API Gateway enable organizations to track and analyze incoming requests, responses, and traffic patterns. By analyzing this data in real-time and integrating with logging and monitoring solutions, organizations can enhance troubleshooting, optimize performance, and ensure compliance with regulatory requirements.

6.1. Request and Response Logging:

API Gateways log details of incoming requests and outgoing responses, including metadata such as request method, URL, headers, parameters, response status code, and payload. These logs capture valuable information about client interactions with the gateway, enabling organizations to understand the flow of requests through the system and diagnose issues related to request processing or response generation.
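
A structured access-log entry might be built as in the sketch below. The field names are assumptions, and the redaction of credential-bearing headers is an addition not mentioned above; it is included because logging headers verbatim would otherwise write credentials to disk.

```python
import json

SENSITIVE_HEADERS = {"authorization", "cookie"}  # never log these verbatim


def access_log_entry(method, url, headers, status, duration_ms) -> str:
    """Return one JSON log line describing a request and its response."""
    safe_headers = {
        name: "[redacted]" if name.lower() in SENSITIVE_HEADERS else value
        for name, value in headers.items()
    }
    record = {
        "method": method,
        "url": url,
        "headers": safe_headers,
        "status": status,
        "duration_ms": duration_ms,
    }
    return json.dumps(record, sort_keys=True)
```

One JSON object per line is easy for log shippers to parse and index.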

6.2. Traffic Analysis:

API Gateways collect and aggregate data about incoming traffic, including request rates, response times, error rates, and throughput. By analyzing traffic patterns and performance metrics, organizations can identify trends, anomalies, and potential bottlenecks in the system, allowing them to optimize resource allocation and improve overall system efficiency.
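
As a small illustration, the sketch below derives an error rate and a 95th-percentile latency from a window of samples. The sample shape (status code, latency in milliseconds), the choice to count only 5xx responses as errors, and the nearest-rank percentile method are all assumptions.

```python
def summarize(samples):
    """Summarize a window of (status_code, latency_ms) samples."""
    if not samples:
        raise ValueError("no samples to summarize")
    latencies = sorted(latency for _, latency in samples)
    errors = sum(1 for status, _ in samples if status >= 500)
    # Nearest-rank percentile: ceil(0.95 * n), computed with integers.
    rank = (95 * len(latencies) + 99) // 100
    return {
        "count": len(samples),
        "error_rate": errors / len(samples),
        "p95_ms": latencies[rank - 1],
    }
```

Percentiles are usually more informative than averages here, because a handful of very slow requests can hide behind a healthy-looking mean.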

6.3. Real-Time Monitoring:

API Gateways provide real-time monitoring dashboards and metrics visualization tools that display key performance indicators (KPIs) and metrics related to request processing, response times, and system health. These monitoring tools allow administrators and developers to monitor the health and performance of the gateway in real-time, detect issues or anomalies, and take proactive measures to address them before they impact users or services.

6.4. Alerts and Notifications:

API Gateways support alerting and notification mechanisms that fire when predefined thresholds or conditions are met, such as high error rates, latency spikes, or service outages.

Alerts can be sent via email, SMS, or integrated with third-party incident management systems, allowing teams to respond promptly to critical issues and minimize downtime or service disruptions.

6.5. Integration with Logging and Monitoring Solutions:

API Gateways often integrate with existing logging and monitoring solutions, such as the ELK stack (Elasticsearch, Logstash, Kibana), Prometheus, Grafana, or commercial APM (Application Performance Monitoring) tools. These integrations allow organizations to centralize logs and metrics from multiple sources, correlate data across different components of the system, and gain deeper insights into system behavior and performance.

Disadvantages:

1. Single Point of Failure:

The API Gateway represents a single point of entry for client requests, making it a potential single point of failure. If the API Gateway experiences downtime or becomes overwhelmed with traffic, it can disrupt the entire system’s availability.

To mitigate the risk of a single point of failure associated with the API Gateway, organizations can implement several strategies.

1.1. High Availability Architecture:

1.1.1 Deploy API Gateways in a high availability configuration with redundant instances distributed across multiple servers or data centers.

1.1.2 Use load balancers or clustering techniques to distribute incoming traffic across multiple instances of the API Gateway.

1.1.3 Implement failover mechanisms to ensure that if one instance of the gateway fails, traffic can be seamlessly redirected to healthy instances without impacting service availability.

1.2. Fault-Tolerant Design:

1.2.1 Design API Gateways to be fault-tolerant by incorporating redundancy and failover mechanisms at every layer of the architecture.

1.2.2 Use redundant components, such as network interfaces, servers, and storage, to minimize the impact of hardware failures.

1.2.3 Implement health checks and monitoring tools to detect failures or performance issues in real-time and trigger automated recovery processes.

1.3. Global Load Balancing:

Use global load balancing techniques to distribute client traffic across multiple geographically dispersed instances of the API Gateway. By spreading traffic across multiple regions or data centers, organizations can minimize the risk of downtime due to regional outages or network disruptions.

1.4. Redundant Data Centers:

1.4.1 Deploy API Gateways in redundant data centers or availability zones to ensure high availability and fault tolerance.

1.4.2 Distribute infrastructure across multiple physical locations to minimize the impact of localized failures, such as power outages or natural disasters.

1.5. Traffic Management and Routing Policies:

1.5.1 Implement intelligent traffic management and routing policies to dynamically adjust routing decisions based on factors such as server health, network latency, and geographic proximity.

1.5.2 Use DNS-based traffic management solutions or content delivery networks (CDNs) to route traffic to the closest and healthiest instances of the API Gateway.

1.6. Disaster Recovery Planning:

1.6.1 Develop comprehensive disaster recovery plans that outline procedures for recovering from catastrophic failures or widespread outages.

1.6.2 Regularly test disaster recovery mechanisms to ensure they can effectively restore service in the event of a major incident.

By implementing these strategies, organizations can minimize the risk of a single point of failure associated with the API Gateway and ensure high availability, fault tolerance, and resilience in their systems.

2. Increased Complexity:

Implementing and maintaining an API Gateway adds complexity to the system architecture. Organizations need to carefully design and configure the API Gateway to handle various use cases, manage routing rules, and ensure fault tolerance and scalability.

2.1. Complexities:

2.1.1. Additional Layer of Abstraction:

API Gateways introduce an additional layer of abstraction between clients and backend services, which can complicate the overall system architecture. Managing this additional layer requires careful consideration of routing rules, request transformations, and integration with backend services, adding complexity to the system design.

2.1.2. Configuration Overhead:

Configuring and managing an API Gateway involves defining routing rules, authentication and authorization policies, rate limiting rules, and other configurations. As the complexity of the system grows, managing these configurations becomes more challenging, leading to increased overhead and potential for errors.

2.1.3. Inter-Service Communication:

API Gateways mediate communication between clients and backend services and, in some deployments, between microservices within the system. Configuring retries, timeouts, and error handling consistently across all of these paths adds complexity to the architecture.

2.1.4. Scalability and Fault Tolerance:

Ensuring that the API Gateway is scalable and fault-tolerant requires additional considerations, such as deploying multiple instances, configuring load balancers, and implementing failover mechanisms. Managing the scalability and fault tolerance of the gateway adds complexity to the system architecture.

To handle the increased complexity introduced by an API Gateway, organizations can adopt the following strategies:

2.2. Strategies to Handle Complexities:

2.2.1. Clear Design and Documentation:

2.2.1.1 Develop a clear design and documentation for the API Gateway, outlining its purpose, functionality, and configuration options.

2.2.1.2 Document routing rules, authentication policies, and other configurations to ensure consistency and clarity for developers and administrators.

2.2.2. Automation and Orchestration:

2.2.2.1 Use automation and orchestration tools to streamline the deployment and management of the API Gateway.

2.2.2.2 Implement infrastructure-as-code practices to define and manage the configuration of the gateway, enabling automated provisioning and configuration management.

2.2.3. Monitoring and Alerts:

2.2.3.1 Implement robust monitoring and alerting mechanisms to track the health, performance, and availability of the API Gateway.

2.2.3.2 Monitor key metrics such as request rates, response times, error rates, and system resource utilization to identify potential issues and take proactive measures to address them.

2.2.4. Testing and Validation:

2.2.4.1 Perform thorough testing and validation of the API Gateway configuration and functionality to identify and address any potential issues or misconfigurations.

2.2.4.2 Implement continuous integration and continuous deployment (CI/CD) pipelines to automate testing and validation processes, ensuring that changes to the gateway are thoroughly tested before deployment.

2.2.5. Training and Skill Development:

2.2.5.1 Provide training and skill development opportunities for developers and administrators responsible for managing the API Gateway.

2.2.5.2 Ensure that team members have the necessary knowledge and expertise to design, configure, and maintain the gateway effectively.

By adopting these strategies, organizations can effectively handle the increased complexity introduced by an API Gateway and ensure that it remains a reliable and efficient component of their system architecture.

3. Performance Bottleneck:

In high-traffic scenarios, the API Gateway can become a performance bottleneck because every client request passes through it. If the gateway cannot keep up with the volume of requests it has to process and forward to backend services, the result is resource contention, increased latency, reduced throughput, and in the worst case service outages.

3.1. Factors Contributing to Bottleneck:

Several factors can contribute to the API Gateway becoming a performance bottleneck:

3.1.1. Inefficient Request Processing:

Complex request processing logic or excessive request transformations can consume CPU and memory resources, slowing down the gateway’s response times.

3.1.2. Overloaded Backend Services:

If backend services cannot keep up with the volume of requests forwarded by the gateway, requests queue up at the gateway while it waits for responses, tying up connections and leading to congestion and performance degradation.

3.1.3. Inadequate Scaling:

If the API Gateway is not scaled appropriately to handle increasing traffic, it may become overloaded during peak periods, resulting in bottlenecks and service disruptions.

3.2. Mitigation Strategies:

To mitigate the risk of the API Gateway becoming a performance bottleneck, organizations can implement the following strategies:

3.2.1. Optimize Request Processing:

Streamline request processing logic and minimize unnecessary request transformations to reduce CPU and memory overhead.

3.2.2. Cache Frequently Accessed Data:

Implement caching mechanisms to cache responses from backend services, reducing the need for repeated requests and improving response times.

3.2.3. Implement Rate Limiting:

Enforce rate limiting policies to throttle incoming requests and prevent the gateway from being overwhelmed with excessive traffic.

3.2.4. Horizontal Scaling:

Scale the API Gateway horizontally by deploying multiple instances and distributing incoming traffic across them using load balancers or clustering techniques.

3.2.5. Offload Workload to Backend Services:

Offload computationally intensive tasks, such as data processing or business logic, to backend services, reducing the load on the gateway.

3.2.6. Monitor and Tune Performance:

Regularly monitor performance metrics such as response times, throughput, and resource utilization to identify performance bottlenecks and tune the gateway configuration accordingly.

3.2.7. Implement Circuit Breaker Pattern:

Implement the circuit breaker pattern to prevent cascading failures by temporarily halting requests to a failing or overloaded service, allowing it to recover and preventing the gateway from becoming overwhelmed.

By implementing these mitigation strategies, organizations can optimize the performance and scalability of the API Gateway, reducing the risk of it becoming a bottleneck during high-traffic periods and ensuring reliable service delivery to clients.

4. Service Dependency:

The API Gateway relies on the availability and reliability of the underlying microservices to fulfill client requests and enforce policies effectively. If one or more microservices experience issues or downtime, it can impact the API Gateway’s ability to route requests, apply security policies, and provide accurate responses to clients.

4.1. Impact of Service Issues:

Service downtime or issues can manifest in several ways and impact the API Gateway:

4.1.1. Request Failures:

If a backend service is unavailable or experiencing issues, the API Gateway may fail to route requests to the affected service, resulting in request failures or timeouts for clients.

4.1.2. Degraded Performance:

Slow response times or increased latency from backend services can impact the overall performance of the API Gateway, leading to delays in request processing and increased client wait times.

4.1.3. Inconsistent Behavior:

Inconsistent behavior or errors from backend services can propagate to the API Gateway, resulting in unpredictable responses or behavior for clients.

4.2. Mitigation Strategies:

To mitigate the impact of service dependency on the API Gateway, organizations can implement the following strategies:

4.2.1. Service Redundancy:

Implement redundancy and failover mechanisms for critical backend services to ensure high availability and fault tolerance. Deploy multiple instances of services across different availability zones or regions to minimize the impact of localized failures.

4.2.2. Health Checks and Monitoring:

Implement health checks and monitoring for backend services to detect issues or failures in real-time. Monitor key metrics such as response times, error rates, and service availability to identify potential issues before they impact clients.

4.2.3. Circuit Breaker Pattern:

Implement the circuit breaker pattern to prevent cascading failures by temporarily halting requests to a failing or overloaded service. The circuit breaker monitors the health of backend services and opens the circuit if the service becomes unavailable, redirecting traffic to alternative paths or providing fallback responses.
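
A minimal circuit breaker might be structured as follows. The thresholds, the `CircuitBreaker` and `CircuitOpenError` names, and the single-trial half-open behavior are assumptions; libraries and gateway products differ in the details.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Open: fail fast without touching the backend.
                raise CircuitOpenError("circuit is open")
            # Timeout elapsed: half-open, let this one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call, or too many failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

The gateway would keep one breaker per backend service and turn `CircuitOpenError` into a fallback response or an HTTP 503. This sketch is not thread-safe.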

4.2.4. Retry and Timeout Policies:

Configure retry and timeout policies in the API Gateway to handle transient errors or timeouts from backend services. Implement exponential backoff strategies to gradually increase retry intervals and prevent overwhelming the service with repeated requests.
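
A retry policy with exponential backoff might look like the sketch below. The function name, the default delays, and the choice of which exceptions count as transient are assumptions for illustration; the timeout itself is left to whatever HTTP client the gateway uses.

```python
import time


def call_with_retry(func, max_attempts=4, base_delay=0.1, max_delay=2.0,
                    retry_on=(TimeoutError, ConnectionError), sleep=time.sleep):
    """Call func(), retrying transient errors with exponentially growing delays."""
    for attempt in range(max_attempts):
        try:
            return func()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # Delay doubles each time: base, 2*base, 4*base, ... capped at max_delay.
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(delay)
```

Many implementations also add random jitter to each delay so that many clients do not retry at the same instant. Retries are only safe for idempotent requests, so a gateway would normally restrict them to operations such as GET.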

4.2.5. Fallback Mechanisms:

Implement fallback mechanisms to provide alternative responses or functionality when backend services are unavailable. Fallback responses can include cached data, static content, or default values to maintain service availability and provide a smooth experience for clients.
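
As a minimal sketch of a cached-data fallback, the function below serves the last known good value when the backend call fails. The function name, the plain dict used as a cache, and the returned "stale" flag are hypothetical choices for the example.

```python
def fetch_with_fallback(key, fetch, cache, default=None):
    """Return (value, is_fallback) for `key`.

    `fetch` is a hypothetical callable that queries the backend service;
    `cache` is any dict-like store of the last successful responses.
    """
    try:
        value = fetch(key)
    except Exception:
        # Backend failed: serve the last known good value, else the default.
        return cache.get(key, default), True
    cache[key] = value  # refresh the cache on every success
    return value, False
```

Returning the flag lets the gateway mark the response as stale, for example with a response header, so clients can decide how much to trust it.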

4.2.6. Graceful Degradation:

Design the API Gateway to gracefully degrade functionality or provide degraded service levels when backend services are unavailable or experiencing issues. Prioritize critical functionality and implement fallbacks for non-critical features to ensure essential services remain available to clients.
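
To make the idea concrete, here is a small sketch of an aggregated response in which one part is critical and the others are optional. The product-page scenario and every name in it are invented for the example.

```python
def build_product_page(product_id, get_product, optional_sources):
    """Assemble a page where only the core product data is mandatory.

    `get_product` is the critical call: if it fails, the error propagates.
    `optional_sources` maps a section name to a callable for a non-critical
    feature (reviews, recommendations, ...); failures there are tolerated.
    """
    page = {"product": get_product(product_id), "degraded": []}
    for name, source in optional_sources.items():
        try:
            page[name] = source(product_id)
        except Exception:
            page[name] = None              # section omitted instead of failing the page
            page["degraded"].append(name)  # record what was dropped for monitoring
    return page
```

With this shape, a slow or failing reviews service costs the client one section of the page rather than the whole response.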

By implementing these mitigation strategies, organizations can reduce the impact of service dependency on the API Gateway and ensure reliable service delivery to clients even in the face of backend service issues or downtime.

5. Vendor Lock-in:

Vendor lock-in occurs when an organization becomes heavily dependent on a particular vendor’s product or service, making it difficult or costly to switch to an alternative solution.

In the case of API Gateways, vendor lock-in can arise from reliance on a specific implementation or service provider for API management capabilities. Organizations may face vendor lock-in if they use proprietary features or tightly integrated services offered by a specific API Gateway solution or cloud provider.

5.1. Challenges of Vendor Lock-in:

Vendor lock-in can pose several challenges for organizations:

5.1.1. Limited Flexibility:

Organizations may find themselves constrained by the capabilities and limitations of the chosen API Gateway solution, limiting their ability to adapt to changing business requirements or technology trends.

5.1.2. Higher Costs:

Switching to a different API Gateway solution or migrating away from a cloud-based service can incur significant costs in terms of reengineering, retraining, and data migration.

5.1.3. Disruption to Operations:

Transitioning to a new API Gateway solution or service provider can disrupt ongoing operations and workflows, leading to downtime, service interruptions, or degraded performance.

5.1.4. Dependency Risk:

Heavy reliance on a single vendor for critical API management functions can create dependency risks, leaving organizations vulnerable to changes in pricing, service levels, or vendor support.

5.2. Mitigation Strategies:

To mitigate the risks of vendor lock-in associated with API Gateways, organizations can adopt the following strategies:

5.2.1. Standardize Interfaces:

Design API contracts and interfaces in a vendor-agnostic manner, adhering to industry standards such as OpenAPI (formerly Swagger) or GraphQL. This approach ensures interoperability and facilitates migration to alternative API Gateway solutions or service providers.

5.2.2. Implement Abstraction Layers:

Introduce abstraction layers or facade patterns between client applications and the API Gateway to decouple client dependencies from specific implementation details. This abstraction layer shields clients from vendor-specific features or functionality, making it easier to switch API Gateway solutions or service providers.
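
The sketch below shows one possible shape for such a layer. Client code depends only on a small vendor-neutral interface, and each gateway product gets its own adapter. Both vendors, their header conventions, and the transport callable are entirely hypothetical.

```python
from typing import Callable, Protocol


class GatewayClient(Protocol):
    """Vendor-neutral interface that client code depends on (hypothetical)."""

    def get(self, path: str) -> dict: ...


class VendorAAdapter:
    """Adapter for an imaginary vendor that expects an API-key header."""

    def __init__(self, transport: Callable[[str, dict], dict], api_key: str):
        self._transport = transport  # callable(path, headers) -> response dict
        self._api_key = api_key

    def get(self, path: str) -> dict:
        return self._transport(path, {"x-api-key": self._api_key})


class VendorBAdapter:
    """Adapter for an imaginary vendor that uses a bearer token and a /v1 prefix."""

    def __init__(self, transport: Callable[[str, dict], dict], token: str):
        self._transport = transport
        self._token = token

    def get(self, path: str) -> dict:
        return self._transport("/v1" + path, {"Authorization": f"Bearer {self._token}"})


def load_order(gateway: GatewayClient, order_id: str) -> dict:
    # Client code sees only GatewayClient, never a vendor-specific detail.
    return gateway.get(f"/orders/{order_id}")
```

Switching vendors then means writing one new adapter, while functions like load_order stay unchanged.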

5.2.3. Use Open Source Solutions:

Consider using open-source API Gateway solutions or self-hosted alternatives that offer greater flexibility and control over the infrastructure stack. Open-source solutions typically have lower barriers to entry and provide more freedom to customize and extend functionality.

5.2.4. Evaluate Multi-Cloud Strategies:

Embrace multi-cloud strategies by distributing workloads across multiple cloud providers or hybrid environments. This approach reduces reliance on a single vendor and mitigates the risk of vendor lock-in by allowing organizations to leverage services from different providers.

5.2.5. Negotiate Favorable Terms:

When negotiating contracts with API Gateway vendors or service providers, seek terms that provide flexibility and minimize lock-in risks. This may include provisions for data portability, interoperability standards, and exit strategies in case of vendor changes or service discontinuation.

5.2.6. Continuous Evaluation and Monitoring:

5.2.6.1 Continuously evaluate the API Gateway landscape and monitor developments in the market to identify alternative solutions or emerging trends that could reduce lock-in risks.

5.2.6.2 Regularly reassess the organization’s API Gateway strategy and vendor relationships to ensure alignment with business objectives, technology requirements, and evolving industry standards.

By implementing these mitigation strategies, organizations can reduce the risks of vendor lock-in associated with API Gateways and maintain flexibility, agility, and control over their API management infrastructure.

When to Select API Gateway:

The API Gateway pattern is a good fit when multiple clients need access to various microservices. For instance, in an e-commerce application, the API Gateway can manage requests for product catalogs, user authentication, customer order processing, inventory management, payment processing, and shipping.

This design pattern is particularly suitable for scenarios where there are multiple microservices with diverse functionalities, each requiring a unified entry point for client communication. It’s a viable choice when there’s a need to simplify client interactions and inter-service communication by abstracting the complexity of the underlying microservices architecture. Additionally, the API Gateway pattern is beneficial when there are cross-cutting concerns such as authentication, authorization, rate limiting, and request aggregation that need to be managed centrally. Organizations looking to improve performance by aggregating and caching responses, as well as those aiming to scale their services independently of each other, can leverage the API Gateway design pattern effectively. Furthermore, when there’s a requirement for centralized logging, monitoring, and analytics, the API Gateway becomes a valuable component of the architecture. In summary, the API Gateway design pattern is best suited for microservices architectures that prioritize communication simplicity, security, performance optimization, and centralized management of client interactions.

Conclusion:

While the API Gateway design pattern offers significant benefits in terms of simplifying client-server communication and enforcing policies, organizations must carefully weigh these advantages against the potential drawbacks and design considerations specific to their use case.